From bold ideas to real assessment change: What we heard at the Universities Australia Solutions Summit

Last week in Canberra, the Universities Australia Solutions Summit lived up to its theme: big ideas, bold conversations, real solutions.

Compared to last year, the mood felt different. There was less anxiety about enrolment volatility and a more deliberate return to the core business of universities: teaching and learning. Assessment wasn’t framed primarily as a compliance issue or a risk-management exercise. Instead, it sat inside a broader conversation about academic work, student capability, and the civic role of higher education.

Across the three days, a clear throughline emerged: the sector is moving from reaction to recalibration.

Here are the themes that stood out, both on stage and in conversations with university leaders throughout the Summit.

1. A deliberate pivot back to learning

The keynote conversation, “Teaching and learning: reimagining learning for the next generation,” featuring Professor Mary O'Kane AC and Luke Sheehy, set the tone early.

The focus wasn’t disruption for disruption’s sake. It was the nature of academic work and how it must evolve to provide meaningful learning experiences in the face of structural, technological, and societal change.

There was a noticeable shift away from prestige narratives and toward core questions:

- What are students actually learning?

- How are academic roles evolving?

- What does quality look like in an AI-enabled world?

It felt like a conscious recalibration: back to learning as the organising principle.

This also reflected a broader assessment trend: leaders are moving past early whiplash reactions to AI and asking, “What are we actually trying to develop in students?” Assessment design is increasingly purpose-driven, layering thoughtful design, process evidence, clear policy, and proportionate consequences—reframing assessment as a tool for learning, not just a compliance check.

2. AI isn’t the disruption. Uncertainty (and fatigue) is.

Very few leaders are still debating whether generative AI belongs in higher education. That conversation has largely moved on.

What surfaced instead was ambiguity fatigue: not resistance, but exhaustion from unclear expectations, shifting guidance, and policy moving faster than practice. The challenge now isn’t technological adoption. It’s institutional clarity.

Assessment design is central to that clarity. Leaders spoke less about “locking down” tasks and more about layered assurance: thoughtful design, visible process evidence, proportionate policy settings, and transparent expectations around AI use.

AI literacy is now part of the core work. Rather than treating AI as a threat, the focus is on embedding generative AI meaningfully across programs, supporting responsible use, and building student capability.

The shift is subtle but significant: from securing assessment to assuring learning.

3. Group work: from contribution disputes to capability design

Group assessment emerged as a live topic, particularly following commentary from Julian Leeser. But the more strategic conversations moved beyond the familiar problem of freeloading.

There was growing recognition that many group work challenges are design issues, not student deficits. Too often, institutions intervene at the end of the process—redistributing marks, calculating contribution percentages, or mediating conflict once it has escalated. At the Summit, discussion focused upstream:

- Clarifying roles and expectations from the outset

- Structuring milestones and visible checkpoints

- Building accountability into the process

- Supporting conflict management before breakdown occurs

- Making contribution visible through structured process evidence

In an AI-enabled environment, that matters even more. Collaborative assessment can strengthen authenticity and transparency, but only if designed intentionally.

4. Universities as democratic institutions

Beyond assessment design, leaders reflected on the broader civic role of universities. One of the most thought-provoking sessions, “Robust and respectful democracy: trust, belonging and challenge on university campuses,” featured Bill Shorten, Dr Susan Carland, and Professor Sharon Pickering.

The premise was clear: universities are foundational pillars of democracy, places where evidence is interrogated, ideas are debated, and students learn to challenge and be challenged.

But that role carries tension. Across the Summit, conversations surfaced issues of racism, antisemitism, Islamophobia, cultural safety, and social cohesion. The underlying question wasn’t simply about free speech policy. It was about how universities foster rigorous debate while also fostering belonging.

One attendee reflected: “It’s all about how you build dialogue, rather than one-upmanship, and creating a safe space to do it.” Universities, by their nature, operate on a longer horizon and are uniquely positioned to cultivate sustained, respectful dialogue across difference.

While AI remained a dominant topic, this theme of social conscience and democratic responsibility ran just as prominently throughout the conference.

5. Integrity and equity are complementary

A recurring theme across conversations was the rejection of a false binary between integrity and inclusion.

Rather than positioning integrity as enforcement and equity as flexibility, university leaders framed well-designed assessment as strengthening both, particularly when expectations are explicit and systems carry more of the compliance burden than individual academics.

Authentic, clearly scaffolded tasks can:

- Reduce ambiguity

- Make responsible AI use explicit

- Strengthen engagement

- Increase transparency of contribution

This felt like a more mature narrative.

Integrity is not a bolt-on compliance layer; it is embedded in inclusive, high-quality design.

6. The integrity “arms race” is real

New cheating technologies—AI-enabled wearables, covert assistive tools—are forcing universities to rethink exam security. Leaders are mindful of privacy and proportionality: identity checks can’t create more problems than they solve.

Institutions are exploring multiple layers of assurance beyond high-stakes surveillance, including process evidence, thoughtful assessment design, and integrated programmatic approaches, to uphold integrity without over-relying on punitive measures.

7. Oral assessment is back

Alongside these broader integrity strategies, vivas and short oral follow-ups are emerging as an extra assurance layer for long-form work.

Oral assessment complements written tasks and process evidence, providing an additional check on understanding and authenticity, particularly in complex or collaborative assessment contexts. It’s a targeted tool that supports both integrity and richer student learning.

8. Toward integrated, programmatic assessment ecosystems

Institutions are simplifying tech stacks and building diverse, balanced assessment models, a “mosaic” approach:

- Secured assessment where it makes sense

- Collaborative and authentic assessment where it strengthens learning

The aim is a coherent, programmatic assessment ecosystem that supports graduate outcomes, student capability, and academic work, while reflecting institutional values.

9. Less crisis, more alignment

Perhaps the most noticeable change from previous years was tone.

There was less crisis language and less fixation on volatility. In its place was a steadier focus on coherence and long-term direction.

From early conversations about the purpose of the university, democratic responsibility, regulation, and social licence, it was clear that institutions are thinking more deeply about their role in society.

That framing carried through the following days.

Day Two’s discussions of Australia’s economic and technological transformation positioned universities as capability builders, not just research engines. Day Three’s focus on skills and global partnerships reinforced that curriculum and assessment sit directly inside the national productivity and social cohesion agenda.

Across all three days, the message was consistent.

The sector does not appear to be searching for silver bullets.

It is looking for alignment.

Alignment between learning outcomes and assessment design.

Alignment between policy and practice.

Alignment between institutional purpose and civic responsibility.

From bold ideas to practical change

If there is one overarching takeaway, it is this: the conversation is maturing.

Assessment is no longer framed solely as a risk to manage in the age of AI. It is being re-centred as a strategic lever—for learning, for integrity, and for the kind of democratic engagement universities exist to support.

The opportunity now is not to generate more noise. It is to translate this recalibration into practical design choices that support academic work and student capability in a socially complex, AI-enabled environment.


More News

Load more

Product

How we use data at Cadmus

Being online creates new opportunities to understand how learning happens. Discover how Cadmus uses data to support learning, improve assessment design, and build transparency and trust across every stage of the assessment process.

Cadmus

2026-03-31

Academic Integrity

Leadership

Why detection-first integrity strategies are becoming a sunk cost for universities

In this article, Founder & Co-CEO Herk Kailis puts language to a growing tension across institutions: as the market leans further into detection, the response risks becoming more reactive than strategic. Through a commercial lens, he explores how this is beginning to resemble a classic sunk cost trap, and why the stronger long-term play is to design assessments that are harder to game in the first place.

Herk Kailis, Founder & Co-CEO, Cadmus

2026-03-30

Product update

Company

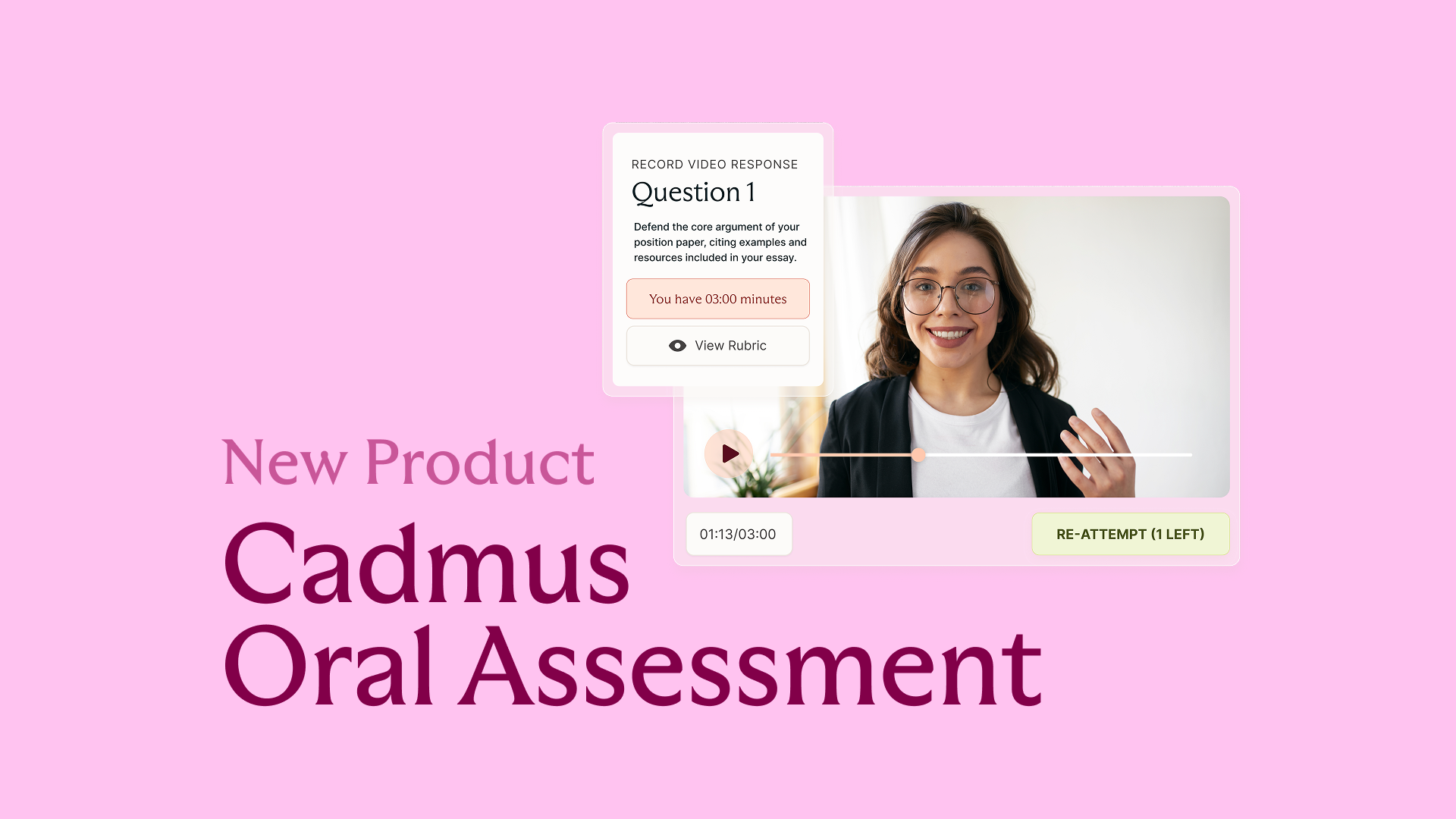

Introducing Cadmus Oral Assessment: Scalable assurance for an AI‑enabled world

Cadmus introduces Oral Assessment: a new approach to strengthening assessment assurance in an AI-enabled world. Starting with scalable, asynchronous vivas, this phased solution embeds targeted oral explanation directly into assessment workflows to provide more credible evidence of student learning.

2026-03-19