What a design-led model of academic integrity actually looks like in practice

"Every system is perfectly designed to get the results it gets."

The Problem Is Not the Students

This paper begins with an uncomfortable idea.

If your students are submitting AI-generated work, copy-pasted essays, and contracted assignments at scale, the primary diagnosis is not a values failure. It is a design failure.

This is not an easy statement for universities to sit with. The instinct, when confronted with widespread academic misconduct, is to first look at the students: their effort, their ethics, their relationship with learning. Then to reach for policy, detection, and consequence.

But look more carefully at the system producing those outcomes, and a different story emerges.

When students submit work they didn't produce, they are — almost always — responding rationally to the incentive structure in front of them. They are completing a task that feels disconnected from any real-world consequence. They are managing the cognitive and time demands of a system that simultaneously expects high performance and provides minimal scaffolding. They are optimising for the grade signal, because the grade signal is what the system has told them matters.

W. Edwards Deming, the quality theorist who helped rebuild Japanese manufacturing after World War II, is widely credited with the observation that hangs above this entire argument: every system is perfectly designed to get the results it gets. If you want different outcomes, you redesign the system. That insight transformed industrial manufacturing. It is directly applicable to academic assessment, and yet higher education, as a sector, has not made the leap.

This paper is about what it looks like when institutions do.

What We Built, and What It Produces

To understand what design-led integrity requires, it helps to understand, clearly and without defensiveness, what the current assessment system was actually built to do — and why it produces the outcomes it does.

Modern university assessment architecture was largely constructed in an era defined by three constraints: large cohort sizes, limited staff time, and the need for defensible standardisation. The dominant model that emerged — a small number of high-stakes summative tasks, often culminating in a final examination — was not designed to optimise for learning. It was designed to produce a grade distribution that could be defended administratively.

John Biggs, the Australian educational psychologist whose constructive alignment framework has shaped curriculum design globally for three decades, identified the core problem with precision. When learning outcomes, teaching activities, and assessment tasks are not coherently aligned, students adapt. They learn not what you intended them to learn, but what they need to perform in order to pass. This is not a character failing. It is a predictable response to misaligned design.

The assessment-as-event model compounds this. When the majority of a student's grade rests on a single submission or examination, the stakes of that single event become extreme. High-stakes, low-frequency assessment creates the conditions for misconduct in the same way that any high-pressure, low-oversight system creates the conditions for shortcuts. This pattern is well established: when the cost of failure is high and the probability of being caught is uncertain, risk-taking behaviour increases. We have built, in other words, an assessment architecture that is behaviourally optimised for misconduct.

This was a manageable problem when the tools available for misconduct were limited. In an era of handwritten examinations and physical libraries, the practical difficulty of cheating imposed its own friction. Generative AI has removed that friction entirely. The same task that once took hours to fabricate can now be produced in seconds. The tools have changed. The architecture has not. And so what was a latent design flaw has become an acute crisis.

Trying to detect and penalise your way out of a design problem is like trying to catch water in a net. You can expend enormous energy. You will not solve the problem.

The question worth asking — the question this paper is about — is what a different design produces.

What TEQSA Is Actually Telling Us

TEQSA, Australia's higher education quality regulator, has been clear on this point.

In its 2020 Good Practice Note on Academic Integrity, TEQSA placed assessment design at the centre of its integrity framework. The guidance was explicit: institutions should adopt a developmental, whole-of-institution approach that prioritises the educational context — including how assessment is designed — rather than focusing primarily on detection and punishment. That guidance preceded the generative AI wave. It was already pointing in the right direction.

By 2023, following the emergence of ChatGPT and its rapid adoption by students globally, TEQSA's guidance became more explicit. Its updated advice on AI and academic integrity directed institutions to review assessment design as a primary response, not a secondary one. The regulator identified several design principles as protective factors: tasks that are personalised and contextualised, that require students to demonstrate process and not just product, that incorporate staged components rather than single submissions, and that are explicitly aligned to the development of graduate capabilities.

Read carefully, TEQSA is not asking institutions to do something extraordinary. It is asking them to implement what the assessment design literature has been recommending for decades. The regulator has arrived at the same place the researchers arrived years ago. The question is whether institutions are ready to follow.

What TEQSA's framework also makes visible is that academic integrity is not a standalone compliance concern. It is nested within the quality of the educational experience. An institution that has strong assessment design has strong integrity outcomes — not because it has made cheating harder to execute, but because it has made learning more genuinely demanded. Integrity is, in this framing, a quality signal.

When integrity problems are widespread, they are not an anomaly to be suppressed. They are a diagnostic — telling you something important about the quality of the learning environment you have built.

This is a profound reframe for institutional governance. Instead of asking 'how do we catch more students?', the governing question becomes 'what does our assessment tell us about the learning environment we have built?' That is a question that belongs at the Deputy Vice-Chancellor (Academic or Education) level, at Academic Board, and at the centre of institutional strategy, not with the academic integrity team alone.

The Intellectual Foundations — What the Research Has Known for Years

The research base for design-led integrity is substantial. It predates the AI conversation by decades. Understanding it matters, because it grounds the design principles in something more durable than a response to the current technology moment.

David Boud has spent his career documenting the gap between what assessment is designed to measure and what it actually produces. Boud's argument is simple: if you want students who can think independently, you have to design assessment that demands independent thinking, not just its simulation.

In Defending Assessment Security in a Digital World, Phillip Dawson draws a crucial distinction between assessment security — making cheating technically difficult — and assessment validity — making tasks so genuinely demanding of real learning that they resist circumvention by design. His argument is that security without validity is futile, because sufficiently motivated students will always find a technical workaround. Validity is the deeper protection.

Dylan Wiliam's work on formative assessment adds another layer. Wiliam's central finding — replicated across hundreds of studies — is that assessment designed to inform and develop learning produces significantly better outcomes than assessment designed only to measure and grade it. The cheating impulse is, in significant part, a disengagement signal. Formative-rich assessment reduces disengagement.

The parallel framework that clarifies the design logic most powerfully comes not from education research but from privacy law. Ann Cavoukian, the former Information and Privacy Commissioner of Ontario, developed the concept of Privacy by Design in the 1990s — the principle that privacy protection should be built into systems architecturally, not bolted on as a compliance layer after the fact. Her seven foundational principles translate with striking directness to academic integrity.

Replace 'privacy' with 'integrity' in Cavoukian's framework and you have the architecture of this model. Integrity by design. Not by detection.

The Universal Design for Learning framework adds a final dimension. UDL argues that designing for the full range of learners — for variability in how people process and express understanding — produces better outcomes for all learners, not just those with identified needs. Assessment designed to accommodate genuine variability in how students demonstrate understanding is harder to game, more equitable, and more educationally valid at the same time. These are not competing goods. They are the same design objective.

Five Principles of Design-Led Academic Integrity

From the research base and from the practical experience of working with institutions attempting this shift, five design principles emerge as foundational. These are not a checklist. They are interdependent — each reinforces the others, and the absence of any one weakens the whole.

Principle 1: Contextualisation

The most powerful integrity protection available in assessment design is specificity. A task that is genuinely specific (to the institution, the course, the cohort, the moment) cannot be meaningfully outsourced, because no external provider has the context needed to complete it.

Generic assessment tasks — the five-paragraph essay on a general topic, the case study drawn from a textbook — are easy to set and easy to mark. They are also easy to fabricate. The design investment required to make a task genuinely contextualised pays for itself many times over in reduced misconduct, reduced investigation burden, and improved validity as a measure of real learning.

Contextualisation can be achieved through locally-relevant case material, through tasks that require students to apply concepts to their own professional or personal contexts, through assessment briefs that incorporate current events or recent course discussions, and through designs that require students to build on their own prior work. Each of these choices makes the task personal. Personalisation is integrity by design.

Principle 2: Progression

Assessment designed as a single event at the end of a learning sequence is structurally fragile. It concentrates risk at a single point, creates maximum pressure, and provides no opportunity to observe the process of learning — only its claimed product.

Assessment designed as a progression — a series of connected, staged tasks that build on each other over the duration of a course — is structurally robust. It distributes risk across multiple points. It makes the trajectory of a student's understanding visible. It creates natural opportunities to verify that earlier work is genuinely the student's own, because later work builds directly upon it.

Progression-based assessment is not merely an integrity mechanism. It is better pedagogy. The research on spaced practice and retrieval is unambiguous: learning distributed over time, with multiple opportunities to apply and build on understanding, produces more durable knowledge than massed, single-event learning. Designing progressive assessment means designing for integrity and for learning quality at once — a double dividend that makes the investment worthwhile.

Principle 3: Authentic Verification

There is a meaningful difference between surveillance and verification. Surveillance is premised on distrust — it watches for bad behaviour. Verification is premised on learning — it seeks evidence that genuine capability exists.

Design-led integrity builds in natural moments of verification: not as gotcha mechanisms, but as opportunities for students to demonstrate, in a live or interactive context, that the understanding they have submitted actually exists in their heads. A brief oral component attached to a written submission. A presentation that requires students to respond to questions about their own work. A reflective component that asks students to articulate their reasoning process.

TEQSA's updated guidance on AI integrity specifically identifies oral and interactive components as protective design features. The reason is clear: it is not yet possible to outsource a real-time demonstration of understanding. The conversation, the question, the moment of spontaneous reasoning — these are hard to fabricate. And they are, incidentally, among the most educationally valuable experiences a student can have.

Principle 4: Transparent Purpose

Students who understand what they are developing, why it matters, and how the assessment connects to their actual future capability are meaningfully less likely to circumvent it. This is not a moral observation. It is a motivational one.

Research on self-determination theory, developed by Deci and Ryan, identifies three conditions that produce intrinsic motivation: autonomy, competence, and relatedness — the experience of connection to something that matters. Assessment that is designed to be transparently purposeful activates all three. Assessment that feels arbitrary, disconnected, or purely administrative activates none.

Transparent purpose requires explicit curriculum design: not just 'here is the task' but 'here is what this task is developing in you, here is how it connects to your graduate capability, here is why this matters in the world you are entering.' This upstream investment in curriculum coherence has substantial downstream integrity benefits that are rarely captured in institutional reporting.

Principle 5: Proportionality

Not all assessment tasks carry the same integrity risk. A first-year introductory essay on a well-covered topic carries a very different risk from a capstone project requiring three years of accumulated disciplinary knowledge. Design-led integrity applies protective features proportionally, not uniformly.

Applying maximum security to every assessment task regardless of risk is not only resource-inefficient; it signals pervasive distrust to students and academics alike. It creates the adversarial culture that makes integrity conversations harder and less productive. Proportional design, by contrast, applies thoughtful protection where it is genuinely warranted and builds trust where the risk is manageable — treating students as honest by default, which the research consistently shows they are, while maintaining appropriate rigour at the points where it genuinely matters.

What It Looks Like in Practice

Principles without practice remain theoretical. The following scenarios show what each of these design shifts looks like in practice — not as abstract ideals, but as concrete alternatives to current norms.

FROM SINGLE SUBMISSION TO STAGED PORTFOLIO

A third-year business ethics course requires a 2,500-word essay on corporate social responsibility, submitted at the end of semester. The task is generically framed. It can be, and frequently is, fabricated using AI tools with minimal modification.

Redesigned, the same learning outcomes are assessed through four connected components across the semester. Week three: a 400-word written identification of an integrity failure in a current Australian business case the student has chosen, with a brief oral explanation to their tutorial group. Week seven: a 600-word structural analysis incorporating frameworks introduced in weeks one to six. Week ten: a peer-review exchange assessed on the quality of critique provided. Week thirteen: a 1,500-word final synthesis that explicitly builds on and responds to the feedback received in week ten.

Each component requires the previous one. The oral component in week three creates a baseline understanding that later work must be consistent with. The peer review creates social accountability. The synthesis requires engagement with feedback that was personally directed. The trajectory of the student's thinking across the semester is visible. The task is, in practice, impossible to outsource without extraordinary effort — effort that, for most students, exceeds the effort of simply doing the work.
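For readers who think in structures, the staged design above can be sketched as data. This is a minimal Python illustration, not a Cadmus feature or a prescribed template: the component names, the `builds_on` links for weeks seven and ten, and the helper functions are assumptions introduced here; only the weeks and word counts come from the scenario.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Component:
    week: int
    name: str
    word_count: int                  # 0 where the scenario gives no word count
    builds_on: Optional[str] = None  # earlier component this one depends on


# Weeks and word counts follow the scenario; the builds_on links for weeks
# seven and ten are an assumed reading of "each component requires the
# previous one".
PORTFOLIO = [
    Component(3, "case identification", 400),
    Component(7, "structural analysis", 600, builds_on="case identification"),
    Component(10, "peer review", 0, builds_on="structural analysis"),
    Component(13, "final synthesis", 1500, builds_on="peer review"),
]


def is_valid_progression(components):
    """Check that every component builds on one scheduled in an earlier week."""
    week_of = {c.name: c.week for c in components}
    for c in components:
        if c.builds_on is None:
            continue
        if c.builds_on not in week_of or week_of[c.builds_on] >= c.week:
            return False
    return True


def total_words(components):
    """Sum the written word counts across all components."""
    return sum(c.word_count for c in components)
```

Under these assumptions the written components total 2,500 words, the same length as the original single essay: the redesign redistributes the writing across the semester rather than adding to it.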

FROM GENERIC TO CONTEXTUALISED BRIEF

A first-year nursing course assesses clinical reasoning through a case study drawn from a textbook published in 2018. The patient, the clinical context, the questions asked — all of it is available online, including pre-written responses on study-sharing platforms.

Redesigned, the case study is drawn from a de-identified patient scenario contributed by the clinical placement partner in the current academic year. The questions incorporate references to specific skills practised in this cohort's laboratory sessions. One question asks students to reflect on a moment from their own placement observation where the clinical reasoning described in the case would have applied. The task cannot be answered without the experiences specific to this cohort's learning journey. It is, by design, non-transferable.

FROM AI-PROHIBITED TO AI-INTEGRATED

An academic writing course bans the use of AI tools entirely. The ban is effectively unenforceable, detection is unreliable, and the prohibition creates an adversarial relationship with a tool students will use throughout their professional lives.

Redesigned, the task requires students to use an AI tool to generate an initial draft on the assigned topic, then to substantially revise it — documenting their revision decisions with tracked changes and written justifications for each significant edit — and submit a reflective commentary on what the AI got wrong, what it got right, and what the gap between the generated text and their own revised version reveals about their developing understanding of the subject.

This task cannot be completed without genuine critical engagement with both the AI output and the subject matter. The submission is inherently personal — no two students' revision decisions or reflections will be the same. And the task teaches something true about how AI tools actually work and what human judgment adds to them. The integrity protection and the pedagogical value are, again, the same design choice.

The System Architecture That Makes This Possible

Individual task redesign is necessary. It is not sufficient.

Design-led integrity at institutional scale requires a supporting system architecture — not a series of heroic individual efforts by motivated academics, but an infrastructure that makes good assessment design the path of least resistance. This is the systems challenge that most integrity reform efforts underestimate.

The Curriculum Layer

Where assessment is designed — in course development, in annual review, in the curriculum mapping that connects tasks to graduate capabilities. This layer needs to be supported by assessment design expertise: not compliance officers, but educators and curriculum designers who can work alongside academics proactively. The model is consultation before misconduct, not investigation after it.

The Pedagogical Layer

Where students are prepared for what assessment demands. A student facing a design-led assessment without preparation in reflective writing, self-assessment, or oral communication will struggle — not because the task is unfair, but because the skills it demands have not been explicitly taught. Design-led integrity requires visible, scaffolded development of the capabilities that authentic assessment demands. This is the UDL principle applied: design in capability development, not accommodation after the fact.

The Verification Layer

Where natural demonstration moments are built in. This does not require a return to mass examinations. It requires thoughtful integration of brief, purposeful, interactive components — the oral attached to the written, the presentation that follows the submission, the tutorial discussion that assumes the reading was genuinely done. At scale, this is operationally challenging. But the challenge is more tractable than the growing resource cost of detection, investigation, and adjudication.

The Data Layer

Where learning analytics inform early intervention. Design-led integrity does not mean being blind to risk. It means responding to early signals of disengagement — drops in formative participation, inconsistencies in writing across submissions, sudden shifts in capability — with support, not surveillance. The universities doing this well are using analytics not to build misconduct cases but to trigger outreach: a tutor check-in, an academic skills referral, a pastoral care conversation. The diagnostic function of assessment data is an integrity tool. It is currently underused.
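The support-first logic described above can be sketched in a few lines of Python. This is an illustrative sketch only: the signal fields, the 0.5 threshold, and the pairing of each signal with a particular outreach action are hypothetical choices made here, not a description of any real analytics platform. The point it shows is that the output is a list of support actions, never a misconduct flag.

```python
from dataclasses import dataclass


@dataclass
class StudentSignals:
    """Hypothetical per-student indicators; field names are illustrative."""
    formative_participation_drop: float  # fractional drop vs. earlier weeks, 0.0 to 1.0
    writing_inconsistency: bool          # style differs noticeably across submissions
    sudden_capability_shift: bool        # abrupt change in demonstrated capability


def outreach_actions(signals, drop_threshold=0.5):
    """Return support actions to trigger for one student.

    The default threshold is an assumed placeholder, not a recommended value,
    and the signal-to-action pairing is one possible mapping among many.
    """
    actions = []
    if signals.formative_participation_drop >= drop_threshold:
        actions.append("tutor check-in")
    if signals.writing_inconsistency:
        actions.append("academic skills referral")
    if signals.sudden_capability_shift:
        actions.append("pastoral care conversation")
    return actions
```

For example, `outreach_actions(StudentSignals(0.6, False, True))` would suggest a tutor check-in and a pastoral care conversation, while a student with no signals produces an empty list and no intervention.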

The Governance Layer

Where policy enables rather than prohibits. Academic integrity policy that is primarily punitive creates cultures of fear and concealment. Policy that is primarily developmental — framing misconduct as a learning opportunity where possible, investing in capability-building before punitive escalation, setting expectations around assessment design as clearly as it sets expectations around student behaviour — creates cultures of transparency. TEQSA's developmental framing is governance guidance. Institutions that have updated their policy architecture to reflect it report meaningfully better outcomes on the metrics that matter.

The Honest Challenges

Intellectual honesty requires naming the genuine difficulties. This is not a cost-free transition, and treating it as one does not serve the institutions trying to make it.

Scale is real. Authentic, design-led assessment is more resource-intensive to develop and, in many modalities, to mark. The institutions navigating this most successfully are those that have made an explicit resource decision: investing upstream in curriculum design and reducing the downstream cost of investigation and adjudication. The accounting is not straightforward, but the direction of the trade-off is clear.

Consistency is genuinely harder to achieve. Standardised rubrics applied to generic tasks are easier to calibrate than complex judgments about the quality of a personalised portfolio. Moderation processes need to evolve. This is not a reason to abandon design-led assessment. It is a reason to invest in marker development and calibration — which are, in any case, good academic practices regardless of integrity considerations.

Academic autonomy is a legitimate value. Design-led integrity requires a level of curriculum coordination and assessment oversight that some academics experience as intrusion. Managing this requires genuine consultation, demonstrated evidence of educational benefit, and institutional leadership that frames the shift as a professional development investment rather than a compliance imposition.

Change management takes time. The research base is clear. The capability to implement is available. The gap is institutional will and implementation infrastructure. Institutions that are serious about this shift are treating it as a five-year programme, not a policy update — building assessment design capacity, piloting in engaged faculties, documenting outcomes, and building the evidence base that earns broader adoption.

The Pattern That Connects All of This

I want to step back from the practical to the structural, because there is a pattern running through everything described in this paper that is worth naming explicitly.

In every case where the current integrity approach fails, the failure has the same shape: a system that treats a design problem as a behaviour problem, and responds with monitoring rather than architecture.

The same pattern appears in product design: the safest manufactured products are not the ones with the most prominent warning labels. They are the ones where failure modes have been designed out — where the product cannot easily be misused because the design makes misuse inconvenient, counterintuitive, or structurally impossible.

The same principle applies here. Academic assessment is more secure when the learning it is supposed to evidence cannot be easily separated from the task itself. Design-led integrity closes that gap — not perfectly, not once and for all, but structurally and systematically, in a way that compounds in value over time rather than depreciating.

A Different Possible Future

I want to end with what is possible, because the sector has been so absorbed in the crisis framing that it has lost sight of what this transition actually enables.

An institution that has genuinely built design-led integrity into its assessment architecture has not merely solved an integrity problem. It has done something much more significant: it has built an assessment system that is, for the first time, a reliable signal of genuine graduate capability.

This matters enormously in a world where employers are increasingly sceptical of traditional academic credentials. Where professional accreditors are asking harder questions about what a degree actually means. Where students themselves are asking, with increasing urgency, what they are actually getting for their investment. Where governments are scrutinising the return on higher education at the system level.

An institution whose graduates have been genuinely assessed — through tasks that demanded authentic understanding, verified through real demonstration, developed progressively over time — can make a claim that very few institutions currently can: that the qualification awarded means exactly what it says. That the person holding it can do what it says they can do. That the credential is a reliable signal.

This is not a modest claim. It is, arguably, the most important claim a university can make. And it is currently undermined — systematically, at scale — by assessment architectures that do not demand genuine learning and cannot distinguish the genuine from the fabricated.

Design-led integrity is not the solution to a crisis. It is the architecture of a better university. The crisis just makes the decision urgent.

The institutions that understand this — that are already building toward it, quietly and carefully, in their curriculum rooms and their academic development programmes — are not just solving a problem. They are building something worth having.

That is what design-led integrity actually looks like in practice. Not a policy update. Not a new detection tool. An institution that builds assessment worthy of the learning it is supposed to represent.

Key References and Further Reading

Biggs, J., & Tang, C. (2011). Teaching for Quality Learning at University (4th ed.). Open University Press.

Boud, D., & Soler, R. (2016). Sustainable assessment revisited. Assessment & Evaluation in Higher Education, 41(3), 400–413.

Cavoukian, A. (2009). Privacy by Design: The 7 Foundational Principles. Information and Privacy Commissioner of Ontario.

Dawson, P. (2021). Defending Assessment Security in a Digital World. Routledge.

Deci, E. L., & Ryan, R. M. (2000). The 'what' and 'why' of goal pursuits: Human needs and the self-determination of behavior. Psychological Inquiry, 11(4), 227–268.

Jacobs, J. (1961). The Death and Life of Great American Cities. Random House.

Rose, G. (1985). Sick individuals and sick populations. International Journal of Epidemiology, 14(1), 32–38.

TEQSA (2020). Good Practice Note: Addressing Contract Cheating to Protect the Integrity of Credentials. Tertiary Education Quality and Standards Agency.

TEQSA (2023). Artificial Intelligence and Academic Integrity: Seizing the Moment. Tertiary Education Quality and Standards Agency.

Wiliam, D. (2011). Embedded Formative Assessment. Solution Tree Press.

-

Brigitte Elliott is Co-CEO of Cadmus, an end-to-end assessment platform built for higher education. She writes about the structural design of assessment systems, the conditions that produce learning at scale, and the relationship between institutional architecture and educational quality.

Category

Academic Integrity

Leadership

More News

Load more

Product

How we use data at Cadmus

Being online creates new opportunities to understand how learning happens. Discover how Cadmus uses data to support learning, improve assessment design, and build transparency and trust across every stage of the assessment process.

Cadmus

2026-03-31

Academic Integrity

Leadership

Why detection-first integrity strategies are becoming a sunk cost for universities

In this article, Founder & Co-CEO Herk Kailis puts language to a growing tension across institutions: as the market leans further into detection, the response risks becoming more reactive than strategic. Through a commercial lens, he explores how this is beginning to resemble a classic sunk cost trap, and why the stronger long-term play is to design assessments that are harder to game in the first place.

Herk Kailis, Founder & Co-CEO, Cadmus

2026-03-30

Product update

Company

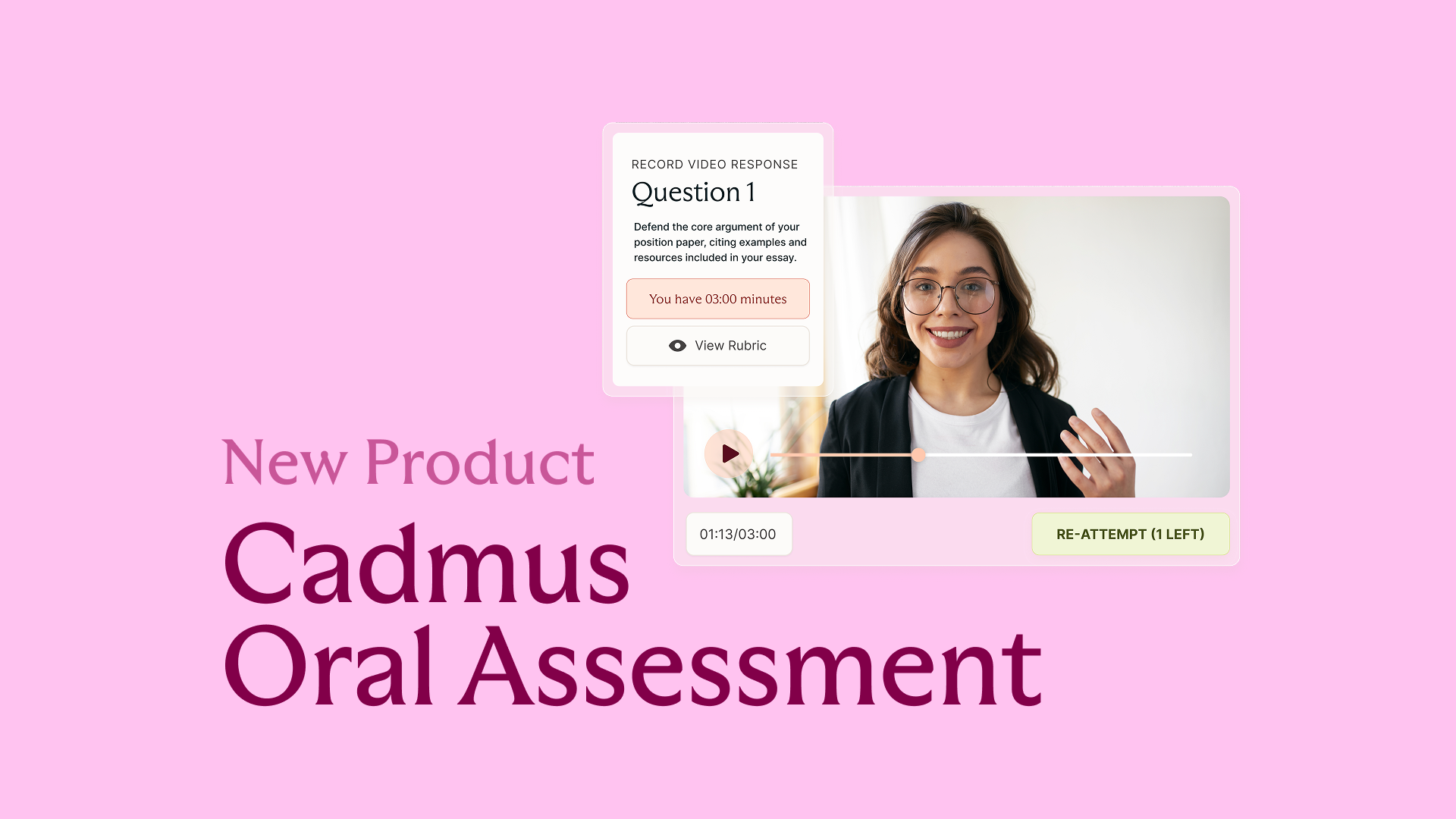

Introducing Cadmus Oral Assessment: Scalable assurance for an AI‑enabled world

Cadmus introduces Oral Assessment: a new approach to strengthening assessment assurance in an AI-enabled world. Starting with scalable, asynchronous vivas, this phased solution embeds targeted oral explanation directly into assessment workflows to provide more credible evidence of student learning.

2026-03-19