Why detection-first integrity strategies are becoming a sunk cost for universities

The arms race nobody can win

There's a concept in business that every operator learns eventually, usually the hard way: sunk cost thinking will quietly bleed you dry.

You've seen it play out. A company invests heavily in a system, a process, a technology. The returns diminish. A better path becomes visible. But instead of pivoting, leadership doubles down—because they've already spent so much. The psychology is understandable. The outcome is almost always the same.

Right now, a significant portion of the higher education sector is sitting in exactly that position. And the investment in question is detection-first academic integrity.

Let me describe a business situation and see if it sounds familiar.

A market player builds a wall to keep bad actors out. The bad actors get over it. The player builds a higher wall. They go around it. The player builds a wider wall. The cycle repeats, each iteration more expensive than the last, until the cost of defence exceeds the value being protected.

This is the economics of every arms race, and it's precisely what's happening in AI-enabled academic misconduct.

Universities are spending—and in many cases, significantly increasing spend—on AI detection tools. Turnitin. GPTZero. Originality.ai. The category is now crowded and growing. The marketing is compelling. The peace of mind it promises is real—at least initially.

The problem is that the underlying logic is broken.

AI detection works on a probabilistic basis. It identifies patterns that suggest machine-generated text. But the models it's trying to detect are constantly being updated, becoming more human in their output with every iteration. Accuracy degrades over time, and false positives aren't trivial. Multiple studies, from Stanford, MIT, and independent researchers, have shown exactly that, to the point that some universities now caution against treating detection as sole evidence in misconduct cases.
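To make the false-positive point concrete, here is a minimal back-of-envelope sketch. Every input is a hypothetical assumption chosen for illustration (cohort size, misuse rate, detector hit rate, false-positive rate); none is a measured figure for any real tool or institution.

```python
# Illustrative only: all inputs below are hypothetical assumptions,
# not measured rates for any real detector or university.

def flagged_breakdown(submissions, misuse_rate, true_positive_rate, false_positive_rate):
    """Return (correct_flags, false_flags, share_of_flags_that_are_false)."""
    misused = submissions * misuse_rate          # submissions that really used AI
    honest = submissions - misused               # submissions that did not
    correct_flags = misused * true_positive_rate
    false_flags = honest * false_positive_rate   # honest students flagged anyway
    total_flags = correct_flags + false_flags
    false_share = false_flags / total_flags if total_flags else 0.0
    return correct_flags, false_flags, false_share


correct_flags, false_flags, false_share = flagged_breakdown(
    submissions=10_000,        # assumed cohort size
    misuse_rate=0.10,          # assumed share of submissions with AI misuse
    true_positive_rate=0.80,   # assumed detector hit rate
    false_positive_rate=0.02,  # assumed detector false-positive rate
)
print(f"Correct flags: {correct_flags:.0f}")
print(f"False flags:   {false_flags:.0f}")
print(f"Share of flags that are false: {false_share:.1%}")
```

Under these assumed inputs, roughly 180 honest students are flagged, close to one in five of all flags. Change the assumptions and the numbers move, but a small false-positive rate applied to a large honest majority still produces a caseload that someone has to investigate.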

You're not buying a solution. You're renting a temporary advantage in a race you’re structurally positioned to lose.

At the Universities Australia Solutions Summit earlier this year, the direction of that race became very clear. University leaders were no longer just discussing AI-generated text. They were discussing AI-enabled wearables—covert devices designed to feed answers to students in real time during supervised examinations. The screen has been circumvented. The battle has moved to the body. If that doesn’t illustrate the futility of detection-first thinking, I’m not sure what does.

What business learned—and when

The analogy I keep returning to is digital rights management in the music industry.

In the late 1990s and early 2000s, the major labels responded to digital piracy with DRM—technological locks designed to prevent unauthorised copying of music. They invested heavily. They litigated aggressively. They built walls.

Consumers found their way around those walls within weeks of each new iteration. The technology arms race was expensive, it frustrated legitimate users, and it failed to stop determined bad actors. By 2009, Apple had dropped DRM from music on iTunes, with the major labels on board. The whole apparatus was dismantled not because piracy disappeared, but because the industry finally asked a different question: what if we made the legitimate experience so good that circumvention wasn't worth the effort?

Spotify didn't defeat piracy through detection. It defeated piracy through design.

This is not an obscure business lesson. In every mature industry, the shift is the same: from inspecting problems at the end, to designing them out at the start. Deming built an entire philosophy on it. Six Sigma is built on it. Cybersecurity is now built on it.

Higher education isn’t an exception to this principle. It's just a very expensive version of it.

The real cost is not what's on the invoice

Here's what I find striking in conversations with university leaders: the real cost of detection isn't financial. The financial cost is real, but it's manageable. The deeper cost is strategic.

When a university organises its integrity response around detection, it makes a series of downstream decisions — in policy, in staff training, in academic culture, in student communication — that are all predicated on the same logic. Find it. Catch it. Penalise it. Over time, the entire integrity infrastructure gets built around this assumption, and the organisation's ability to ask a different question starts to erode.

The different question is: what if we designed assessments that couldn't be meaningfully gamed in the first place?

This is not a radical or untested idea. Assessment scholars like David Boud, Phillip Dawson, and Cath Ellis have been making this argument for years—long before generative AI made it urgent. The research on authentic, performance-based, and contextualised assessment is substantial. The tools to operationalise it at scale now exist. What's been missing, in many institutions, is the commercial logic to justify the transition.

That logic now exists in abundance.

The economics of the alternative

Let me put this in terms any provost, deputy vice-chancellor (DVC), or CFO can model.

Detection-first integrity requires ongoing investment in tools that depreciate in effectiveness over time. It generates a significant administrative burden in investigation and adjudication. It exposes institutions to legal and reputational risk through false positives. It creates an adversarial dynamic with students—undermining the trust universities depend on for retention, reputation, and advocacy.

Design-led integrity—building assessments that require genuine demonstration of learning, that are contextualised, personalised, and iterative—has a different cost profile. The upfront investment is real: in curriculum redesign, in staff capability development, in the platforms that make it operationally viable. But the marginal cost of each subsequent assessment cycle decreases. The administrative burden of investigation drops. The false positive problem disappears. And critically, the assessment itself becomes a stronger signal of actual graduate capability—which is, ultimately, what employers are paying attention to and what accrediting bodies are increasingly demanding.

This is what a pivot from a sunk cost looks like. Not abandonment—you don't stop monitoring your systems entirely—but a deliberate reallocation of investment from detection to design, with the full understanding that the returns on detection are declining and the returns on design are compounding.
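The two cost profiles described above can be roughed out in a few lines. This is a sketch under stated assumptions, not a costing model: the figures are arbitrary cost units, and the growth and decay rates are hypothetical placeholders that an institution would replace with its own numbers.

```python
# Hypothetical figures throughout: arbitrary cost units, assumed rates.

def detection_cumulative(years, annual_tools=100.0, annual_investigation=60.0, growth=0.10):
    """Cumulative detection-first cost, assuming yearly spend escalates by `growth`."""
    total, yearly, out = 0.0, annual_tools + annual_investigation, []
    for _ in range(years):
        total += yearly
        out.append(total)
        yearly *= 1 + growth   # assumed escalation in tools and casework
    return out


def design_cumulative(years, upfront=400.0, first_year_running=120.0, decay=0.25):
    """Cumulative design-led cost: upfront redesign, then running costs that shrink."""
    total, running, out = upfront, first_year_running, []
    for _ in range(years):
        total += running
        out.append(total)
        running *= 1 - decay   # assumed fall in marginal cost per cycle
    return out


def break_even_year(years=10):
    """First year in which cumulative design cost is at or below detection cost."""
    pairs = zip(detection_cumulative(years), design_cumulative(years))
    for year, (detection, design) in enumerate(pairs, start=1):
        if design <= detection:
            return year
    return None   # no break-even within the horizon


print("Break-even year under these assumptions:", break_even_year())
```

With these placeholder inputs, the design-led path costs more for the first three years and less from year four onward. The specific year matters less than the shape of the curves: one line keeps climbing, the other flattens.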

A word on timing

I am not suggesting this transition is simple. Academic change management is slow for structural reasons—institutional governance, academic autonomy, resourcing constraints—and those reasons are not illegitimate. The institutions that are already moving in this direction are doing so carefully, with strong faculty engagement, supported by evidence.

One of the most telling signals at the Universities Australia Solutions Summit was the quiet return of oral assessment. Vivas and short oral follow-ups attached to written submissions are re-emerging, not as nostalgia, but as a practical design choice. When students know they will be asked to explain their own work, they tend to actually do it. That one design decision dissolves an enormous amount of the misconduct problem without a single detection tool, a single investigation, or a single misconduct finding.

But timing matters in every market. The institutions that are asking the design question now, while the detection market is still crowded and confident, will be positioned very differently in five years than those that are still waiting for a detection tool good enough to make the problem go away.

That tool is not coming. The market will not solve this problem through better detection. The underlying technology asymmetry is too steep, and it favours the adversary.

The question worth asking isn't whether to shift. It’s how long you’re willing to keep investing in something that’s structurally losing.

Herk Kailis is Founder and Co-CEO of Cadmus, an assessment platform built for higher education. He writes about the commercial logic of learning design, institutional strategy, and how universities can build systems that work better than the problems they're trying to solve.

More News

Load more

Product update

Company

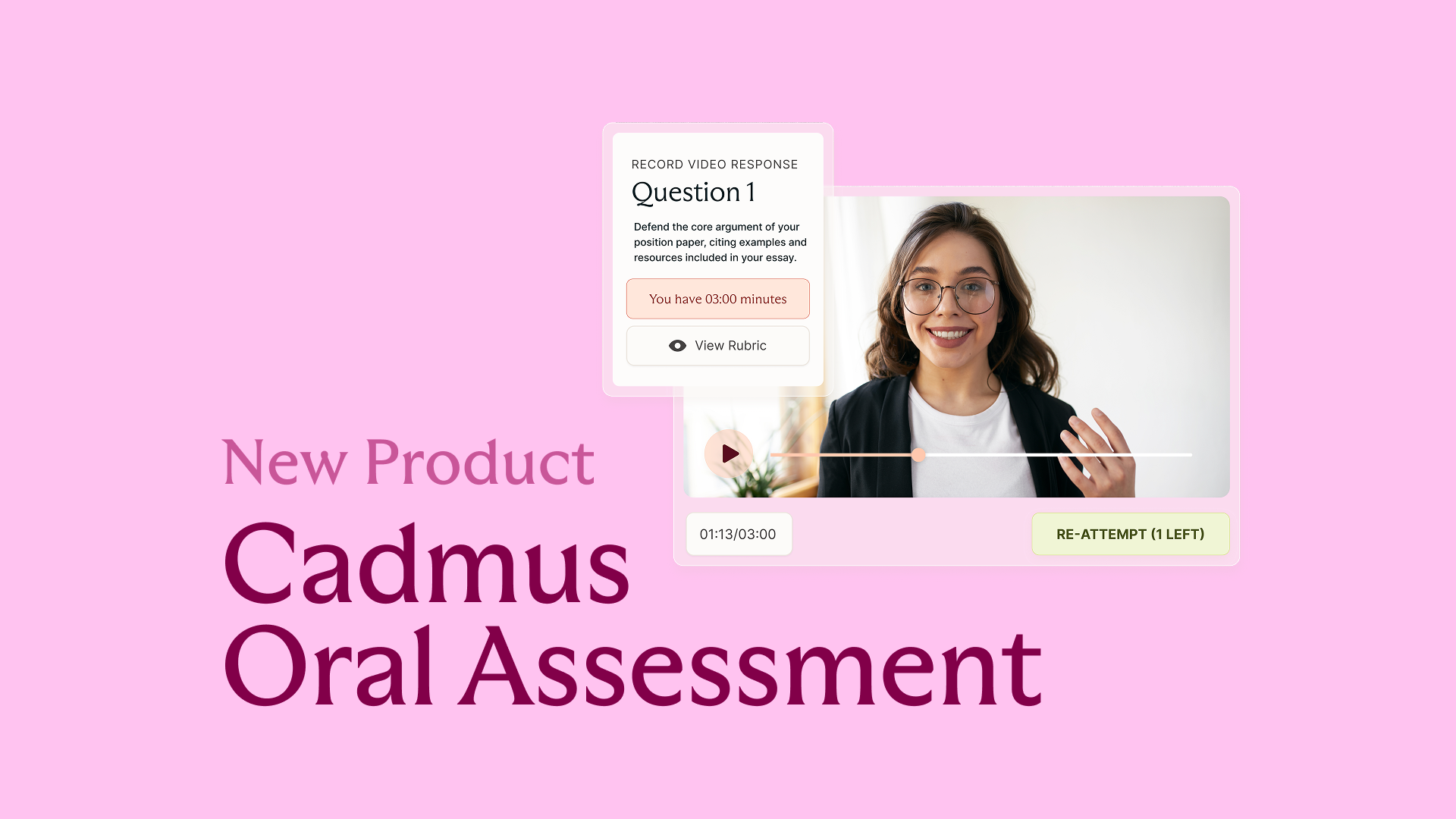

Introducing Cadmus Oral Assessment: Scalable Assurance for an AI‑Enabled World

Cadmus introduces Oral Assessment: a new approach to strengthening assessment assurance in an AI-enabled world. Starting with scalable, asynchronous vivas, this phased solution embeds targeted oral explanation directly into assessment workflows to provide more credible evidence of student learning.

2026-03-19

Assessment Design

Student Success

Group work is not the problem. Poor design is.

This article explores how challenges in group work are often linked to assessment design, rather than student behaviour. It highlights how clearer roles, structured milestones, and greater visibility of contributions can improve fairness and outcomes, while better supporting both students and educators throughout the process.

Cadmus

2026-03-13

Student Success

Assessment Design

The hidden lever for student success: Assessment design

Assessments shape how students learn: where they focus their effort, how they approach research and writing, and how confidently they demonstrate their knowledge. When assessment is designed to support learning, not just measure it, students are far better positioned to succeed. Here’s how universities are rethinking assessment design to improve engagement, strengthen integrity, and support better student outcomes.

Cadmus

2026-03-13