Why universities agree with design-led integrity—but still struggle to move beyond detection


One pattern shows up in every market I’ve worked in: the gap between what organisations say they believe and what they actually buy is where markets stall. It’s rarely a knowledge gap. It’s an organisational one. And once you’ve seen it enough times, you start to notice it everywhere.

Right now, I see it across higher education.

Ask any DVC-Academic whether they believe detection-first integrity strategies are sufficient in an AI-enabled world. Almost none of them will say yes. The research is clear, the regulators have weighed in, and frankly the evidence is in front of them every day. They know detection is losing. They know assessment design is the answer. They will tell you this quite openly.

And then they renew their Turnitin contract.

I don’t say that to be glib. I say it because the dynamic is a familiar one, and understanding why it happens is the only way to move past it.

The Procurement Trap

In SaaS, we have a name for this. We call it the incumbent advantage—and it has nothing to do with product quality. The incumbent wins renewal not because it’s the best solution, but because it’s the path of least resistance. It sits inside existing procurement frameworks. It has a known budget line. It requires no internal change management, no new workflows, no convincing of colleagues who weren’t in the room when the decision was made. It’s administratively easier to keep what already exists.

Detection tools fit this profile perfectly. They plug into existing LMS infrastructure. They produce a report. They give someone a number they can put in a policy document. They create the appearance of action without requiring any change to the underlying system. For an institution under pressure—enrolment volatility, staff capacity constraints, regulatory scrutiny—that appearance of action is genuinely valuable. I understand the appeal. I just don’t think it survives contact with the actual problem.

Because here is what detection tools cannot do: they cannot protect your institution’s reputation when the story breaks.

The Risk Your Board Is Not Pricing

Let me put this in terms that belong in a risk register, because I think that’s where this conversation needs to happen.

Academic credential fraud—at scale, enabled by AI, undetected by your current tools—is a reputational event waiting for a journalist. It only takes one visible failure for this to become a board-level issue. And when it happens, the question your Vice-Chancellor will be asked is not “did you have a detection tool?” The question will be “did you know this was happening, and what did you do about the system that produced it?”

Detection gives you a partial answer to the first question. It gives you nothing for the second.

I’ve watched this risk calculus play out in other industries. Financial services, healthcare, data privacy: every sector has had its version of the moment when the compliance-first, detection-based approach proved insufficient. The pattern is consistent. The timing is always a surprise. The outcome is always predictable in retrospect.

Higher education is not exempt from this dynamic. It is approaching it.

The Organisational Inertia Problem

So why doesn’t the sector move faster? There are three forces operating simultaneously.

The first is the visibility problem. Detection produces metrics—numbers of detections, numbers of cases, numbers of outcomes. These metrics can be reported upward, put in annual reports, presented to Academic Board as evidence of a functioning integrity system. Design-led assessment, by contrast, produces an absence of problems. The absence of problems is hard to count, hard to credit, and politically difficult to claim as a win. Most organisations are better at funding visible activity than valuing invisible prevention.

The second is change management debt. Moving from detection-first to design-led is not a procurement decision. It is a curriculum decision, a technology decision, a staff capability decision, and a governance decision simultaneously. For an institution that has built its entire integrity infrastructure around detection, the switching cost looks enormous—not because it is properly accounted for, but because the costs of change are immediate and visible while the benefits are distributed and slow. This is where a lot of otherwise sensible decisions stall.

The third is the language barrier. Design-led integrity lives in the vocabulary of learning science and curriculum theory. The people who understand it best are not, typically, the people sitting on investment committees or budget approval processes. The people who control those decisions speak a different language—risk, ROI, reputational exposure, competitive positioning. Until this conversation is translated into that language and taken into those rooms, the gap between belief and behaviour will persist.

The Platform Moment

Here’s the commercial reality that I think is underappreciated in this sector.

Assessment in higher education is going through the same transition that CRM went through in the early 2000s, that HRIS went through in the 2010s, that every complex enterprise workflow goes through eventually: from a fragmented collection of point solutions to an integrated platform. That transition always follows the same arc. First, specialists emerge for each part of the problem. Then, the cost of integrating those specialists—in procurement, in data management, in staff time—becomes unsustainable. Then, the market consolidates around the platforms that can hold the whole workflow.

That moment is arriving in assessment. Institutions are actively simplifying their technology stacks. They are looking for coherent ecosystems rather than collections of tools. And critically, they are looking for platforms that are aligned—with TEQSA, with the accreditation frameworks, with the direction of regulatory travel—because in a sector where regulatory risk is real, being on the wrong technology at the wrong moment is an institutional liability.

This is the context in which platforms like Cadmus become more relevant: not as another point solution, but as infrastructure aligned with where assessment is already heading. Not because we say so, but because the regulatory framework and the research base point in the same direction we do. That kind of alignment is hard to replicate quickly, and it matters more as the market consolidates.

The window for making that move is open now. Markets consolidate faster than institutions expect. The institutions that move earlier usually get more choice, lower switching pain, and more influence over how the model lands internally. The ones that wait will face a harder, costlier transition in a less forgiving environment.

What I’d Say to a Vice-Chancellor

I’ve sat across the table from a lot of enterprise buyers over the years. When a university leader tells me they believe in design-led integrity but have not yet moved, I hear something specific: they haven’t yet connected the intellectual conviction to the institutional risk, and they haven’t seen a path to implementation that feels manageable.

The intellectual case is won. The research is settled, the regulators have spoken, and detection is visibly losing the technology arms race. What remains is the organisational case: that the cost of inaction is higher than it looks, that the cost of transition is lower than it feels, and that the window for a deliberate, well-supported shift is open now in a way it will not be indefinitely.

The institutions that understand what is at stake here are not choosing between detection and design because one is cheaper or easier. They’re choosing based on what they believe a university education should actually produce, and they’re not willing to let procurement convenience get in the way.

That’s a decision worth making. And it’s one I’d like to help more institutions make.

-

Nick Bareham is Chief Growth Officer at Cadmus. He has spent his career scaling revenue organisations from Series A to Series D across complex enterprise markets. He writes about institutional change, the commercial logic of platform decisions, and what the SaaS playbook reveals about the choices higher education is navigating now.

More News

Load more

Product

How we use data at Cadmus

Being online creates new opportunities to understand how learning happens. Discover how Cadmus uses data to support learning, improve assessment design, and build transparency and trust across every stage of the assessment process.

Cadmus

2026-03-31

Academic Integrity

Leadership

Why detection-first integrity strategies are becoming a sunk cost for universities

In this article, Founder & Co-CEO Herk Kailis puts language to a growing tension across institutions: as the market leans further into detection, the response risks becoming more reactive than strategic. Through a commercial lens, he explores how this is beginning to resemble a classic sunk cost trap, and why the stronger long-term play is to design assessments that are harder to game in the first place.

Herk Kailis, Founder & Co-CEO, Cadmus

2026-03-30

Product update

Company

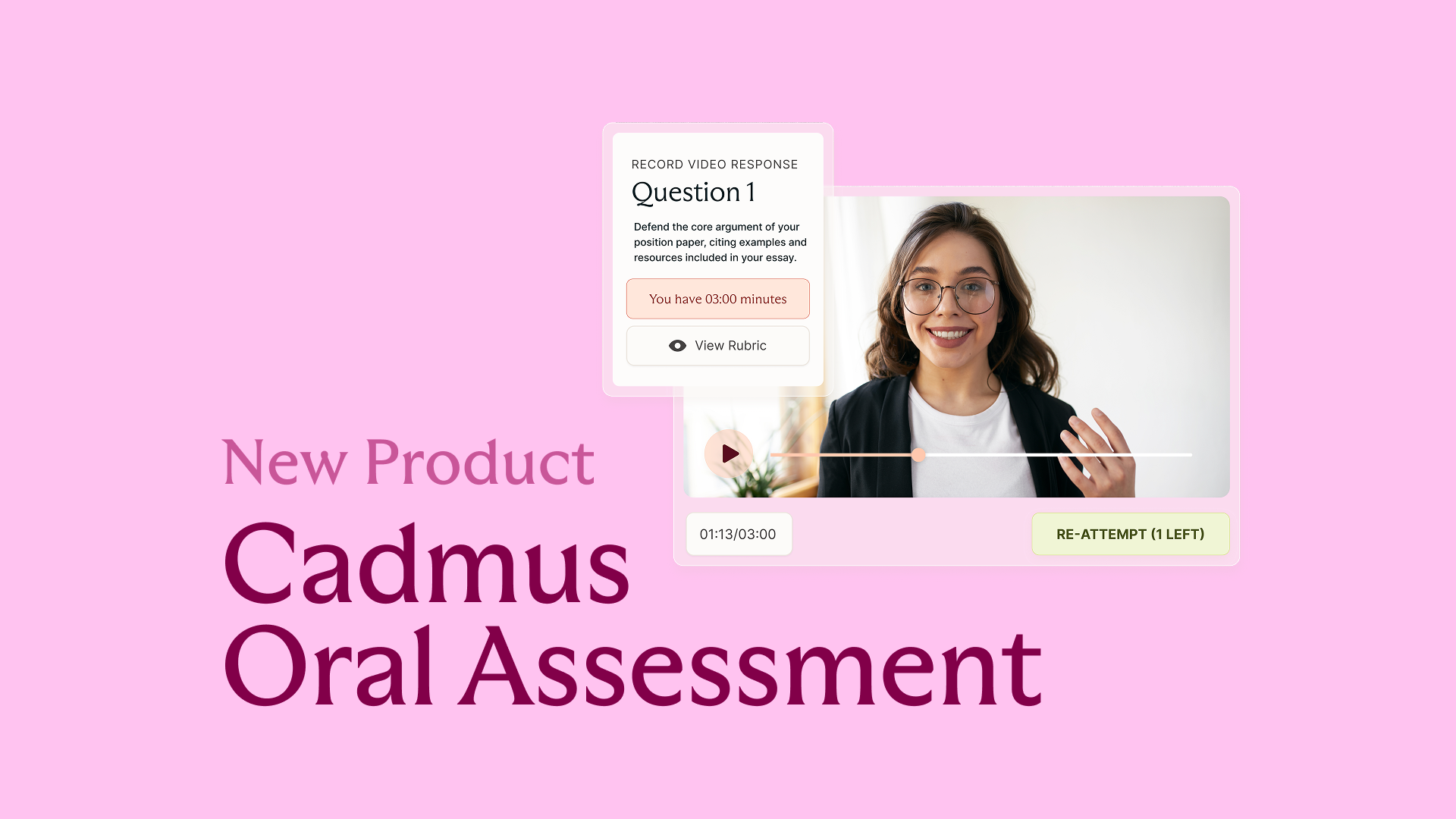

Introducing Cadmus Oral Assessment: Scalable assurance for an AI‑enabled world

Cadmus introduces Oral Assessment: a new approach to strengthening assessment assurance in an AI-enabled world. Starting with scalable, asynchronous vivas, this phased solution embeds targeted oral explanation directly into assessment workflows to provide more credible evidence of student learning.

2026-03-19